Optimization treasure trove: A strategic paradigm in advanced signal processing

Published: March 31, 2010

Details of the research

Ever since Johann Carl Friedrich Gauss devised the method of least squares around 1794, most signal processing challenges have been tackled by translating them into ‘constrained optimization problems’. Such problems involve minimizing a ‘cost function’ over a constrained subset of a vector space, and over the years a great deal of effort has been devoted to finding smarter translations that yield more accurate results.
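As a hedged illustration in symbols (the notation $f$, $C$, $\mathcal{H}$, $A$ and $b$ is assumed here and is not taken from the original papers), such a problem has the generic form below. Classical least squares is the special case with cost $f(x) = \|Ax - b\|^2$ and no constraint, that is, $C = \mathcal{H}$.

```latex
\[
  \text{minimize } f(x) \quad \text{subject to } x \in C \subset \mathcal{H}
\]
```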

Now, Isao Yamada and his colleagues at Tokyo Tech's Department of Communications and Integrated Systems propose a pair of powerful mathematical ideas applicable to many so-called ‘knotty’ constrained optimization problems. Such problems are regarded as theoretically ideal formulations of signal processing tasks, but they have been left untouched for many years because no suitable numerical optimization algorithms were available.

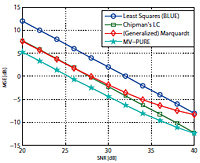

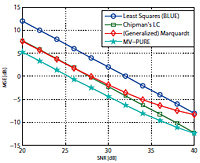

The first idea was developed for a wide range of ‘convexly constrained inverse problems’ as well as ‘convexly constrained design problems’. It builds on the so-called hybrid steepest descent method, which Yamada originally developed for minimizing a convex function over the set of all fixed points of certain nonlinear mappings.

The second idea, named MV-PURE (Minimum-Variance Pseudo-Unbiased Reduced-Rank Estimator), is specialized for ‘ill-conditioned inverse problems’. It provides the solutions to a hierarchical non-convex constrained matrix optimization problem, in which special conditions are imposed to minimize the effects of amplified noise.
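To make the hybrid steepest descent method mentioned above more concrete, here is a minimal sketch in Python. It is not taken from the cited papers. It assumes the commonly stated form of the iteration, x ← T(x) − λₙ μ ∇f(T(x)), with a nonexpansive mapping T and a slowly vanishing step sequence λₙ = 1/(n+1). The toy problem (projecting a point `b` onto the intersection of a unit ball and a half-space), the helper names `project_ball` and `project_halfspace`, and the parameter values are all assumptions chosen for illustration.

```python
import numpy as np


def project_ball(x, radius=1.0):
    """Project x onto the closed Euclidean ball of the given radius."""
    norm = np.linalg.norm(x)
    return x if norm <= radius else x * (radius / norm)


def project_halfspace(x, a, c):
    """Project x onto the half-space {z : <a, z> <= c}."""
    violation = a @ x - c
    return x if violation <= 0 else x - (violation / (a @ a)) * a


def hybrid_steepest_descent(grad, T, x0, mu=1.0, n_iter=5000):
    """Sketch of the iteration x <- T(x) - lam_n * mu * grad(T(x)).

    T is assumed nonexpansive, and grad is assumed to be the gradient of a
    strongly convex function with Lipschitz-continuous gradient.
    """
    x = np.asarray(x0, dtype=float)
    for n in range(1, n_iter + 1):
        lam = 1.0 / (n + 1)          # lam_n -> 0 while sum(lam_n) diverges
        y = T(x)                     # move toward the fixed-point set of T
        x = y - lam * mu * grad(y)   # small descent step on the cost
    return x


# Toy problem (illustrative assumption): minimize 0.5 * ||x - b||^2 over the
# intersection of the unit ball and the half-space {x : x[0] <= 0.5}.
b = np.array([2.0, 1.0])
a = np.array([1.0, 0.0])


def grad(x):
    return x - b                     # gradient of 0.5 * ||x - b||^2


def T(x):
    # Composition of two projections: nonexpansive, and its fixed points are
    # exactly the points in both sets (the sets intersect here).
    return project_ball(project_halfspace(x, a, 0.5))


x_hat = hybrid_steepest_descent(grad, T, np.zeros(2))
print(x_hat)
```

For this toy problem the constrained minimizer can be worked out by hand as the corner point (0.5, √0.75), so the printed iterate should lie close to it. The point of the design is that the constraint set is never handled directly: it enters only through the mapping `T`, which is what lets the method address constraint sets described as fixed-point sets.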

References

- K. Slavakis & I. Yamada.

Title of original paper: Robust wideband beamforming by the hybrid steepest descent method.

IEEE Trans. Signal Processing, 55, 4511–4522 (2007)

Digital Object Identifier (DOI): 10.1109/TSP.2007.896252

Department of Communications and Integrated Systems

Department website: http://www.ss.titech.ac.jp/

- T. Piotrowski, R.L.G. Cavalcante & I. Yamada.

Title of original paper: Stochastic MV-PURE Estimator—robust reduced-rank estimator for stochastic linear model.

IEEE Trans. Signal Processing, 57, 1293–1303 (2009)

Digital Object Identifier (DOI): 10.1109/TSP.2008.2011839

Department of Communications and Integrated Systems

Department website: http://www.ss.titech.ac.jp/
