Coexistence with robots and AI in human society is another important area of research for Yoshida. As a principal investigator in the Human-Information Technology Ecosystem project funded by the Japan Science and Technology Agency (JST), she engages in joint research with experts in law and philosophy.

"In this project, we explore the question of who responsibility should fall upon when an AI or robot with AI causes an accident — the manufacturer, the user, or the AI itself. Insisting that manufacturers bear responsibility would cause a scaling down of the AI and robotics industries as companies seek to reduce risk. In addition, AIs learn and change through interaction with users; therefore, some argue that it would be unfair to make manufacturers completely liable for mishaps."

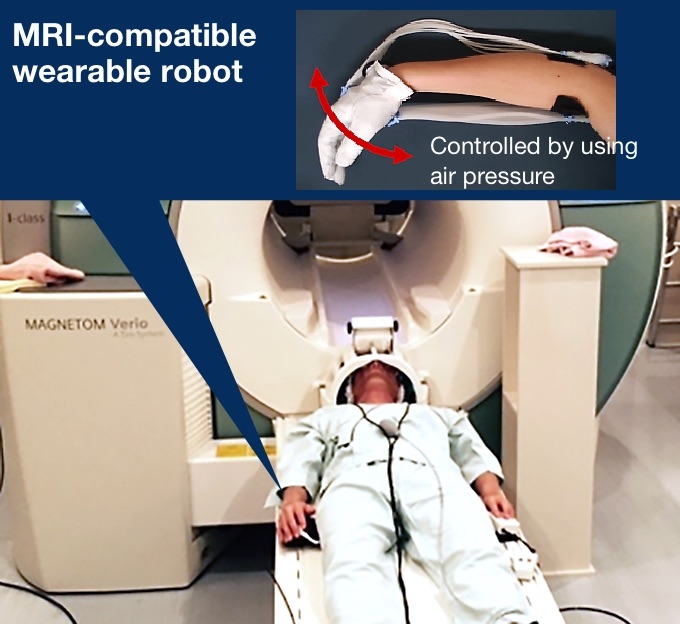

Furthermore, wearable robotics and vehicle autopilot systems create circumstances where humans and AI share control over the same functions. The decisions made by a human operator and those made by an AI may not always match, and this can shift where responsibility lies in the event of an accident.

Fundamentally, we might ask, is it even sensible to hold an AI or robot responsible for a mishap?

"Many matters regarding AI and robots have been discussed. How far would an AI or robot have to develop to be considered legally equal to humans? Is it possible for AIs and robots to exhibit common sense and emotion? Would we even want them to have such abilities? Some argue that determining responsibility is just one of the relatively new ideas from Western society, and that it may be possible to resolve these new social issues regarding AI and robots using methods that do not depend on determining the responsible party."

With the term singularity⁶ being used so often recently, many issues have emerged regarding the integration of humans and machines, and coexistence with robots and AIs.

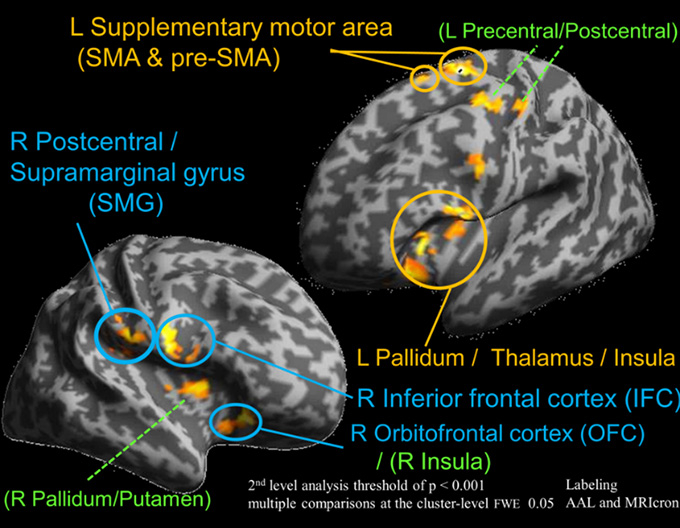

"Addressing these issues," says Yoshida, "requires not only the viewpoints of law, philosophy, and sociology experts, but also a scientific approach to clarify the influences on the human brain and psychology."

At the conclusion of the interview, Yoshida had some advice for students and early-career researchers. "Many young people tend to worry about which field they should enter, liberal arts or science, engineering or physics. I would advise them not to worry about this. Rather, pursue what you are really interested in, what you want to do for the rest of your life. Careers in science are not the only way this goal can be achieved, especially in the area of human sense and perception that I am engaged in. Many graduates have chosen not to pursue careers in science, but have started their own businesses, or become media artists and writers. I would encourage young people to follow their own paths to their goals. Don't limit yourself to small frameworks that narrow your possibilities."

1 Avatar

A graphic representation of the user or some variation thereof.

2 User interface

A mechanism that allows users to interact with computers, software, and systems.

3 Multimodal interface

A concept of combining multiple senses such as vision, hearing, touch, smell, and somatic sense (sense of equilibrium, sense of space, etc.).

4 Crossmodal interface

A concept that involves interactions between two or more different sensory modalities such as vision and hearing, vision and touch, and taste and touch.

5 Brain-machine interface

Interface technology that supports communication between the brain and external devices, such as computers, through the detection and extraction of brainwaves or other brain information and the provision of stimulus to the brain.

6 Singularity

The hypothetical point where AI exceeds human intelligence (technological singularity) or brings about a change in the world.
